Time to put the theory learnt last time into practice. This means turning the Phong model into shader code, and feeding the GPU the extra data it requires (surface normals, light/material properties, etc.).

Step 1: Copy the Previous Tutorial’s Code

As usual, we’ll kickstart the process by reusing the previous tutorial’s code. So download the code now, and create a new copy. For convenience, here’s a direct link: W3DNTutorial5.lha

Step 2: Phong As a GLSL Shader

Lighting is best calculated on a per-pixel basis in the fragment shader. That gives the most accurate results. That said, the vertex shader also needs updating to provide the fragment shader with the data it needs. So let’s get started…

Vertex Shader (Colour3D.vert)

Inputs, Uniforms, and Outputs

The surface normal is critical to all lighting calculations. This vector is calculated ahead of time and is stored in the vertex array. So, add vertNormal to the list of shader inputs:

in layout(location = 2) vec3 vertNormal;

Light and eye direction vectors are also needed. These are calculated in “view” (or camera) space, so separate the Model-View-Projection (MVP) matrix (mvpMatrix) into two parts:

    uniform layout(location = 0) mat4 ProjectionMatrix;
    uniform layout(location = 1) mat4 ModelViewMatrix;

Surface normals also need to be transformed into view space, but only by the rotation part of the MV matrix. We calculate this ahead of time and pass it to the GPU as a separate matrix:

uniform layout(location = 2) mat4 NormalMatrix;

Finally, we need to know the light’s position in view space. We’re only using a single point light, so this is a single vector:

uniform layout(location = 3) vec3 LightPosition; // NOTE: position in view space (so after being transformed by its own MV matrix)

We also have some more data to pass to the fragment shader:

    out vec3 normal;
    out vec3 lightVec;
    out vec3 eyeVec;

These three vectors describe how the light hits the surface and bounces into the eye. They’re key to calculating how bright the surface appears.

The Actual Code

Here’s the updated code in its entirety:

void main() {

// Pass the colour through

colour = vertCol;

// Calc. the position in view space

vec4 viewPos = ModelViewMatrix * vec4(vertPos, 1.0);

// Calc the position

gl_Position = ProjectionMatrix * viewPos;

// Transform the normal

normal = normalize((NormalMatrix * vec4(vertNormal, 1.0)).xyz);

// Calc. the light vector

lightVec = LightPosition - viewPos.xyz;

// Calc. the eye vector (which looks back through the view ray)

// NOTE: Leave normalization for fragment shader, as it's best done on a

// per-pixel basis

eyeVec = -viewPos.xyz;

}

The first line is unchanged (the one that passes the colour to the fragment shader). After that, the code changes. Calculating the position is now a two-step process:

    // Calc. the position in view space
    vec4 viewPos = ModelViewMatrix * vec4(vertPos, 1.0);
    // Calc the position
    gl_Position = ProjectionMatrix * viewPos;

Next, the surface normal is transformed into view space using NormalMatrix:

    // Transform the normal
    normal = normalize((NormalMatrix * vec4(vertNormal, 1.0)).xyz);

After that, the light vector is calculated. This is the vector pointing from the vertex to the light.

    // Calc. the light vector
    lightVec = LightPosition - viewPos.xyz;

Finally, the eye direction vector is calculated. Well, partially. Strictly speaking a direction vector should be normalized so it has a length of 1, but that normalization is left for the fragment shader. It’s more accurate that way.

    // Calc. the eye vector (which looks back through the view ray)
    // NOTE: Leave normalization for fragment shader, as it's best done on a
    // per-pixel basis
    eyeVec = -viewPos.xyz;
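To make those two vectors concrete, here is a minimal CPU-side sketch in C that mirrors the same calculations. The Vec3 type and the function names are made up for illustration; they are not part of the tutorial's code or of Warp3D Nova.

```c
/* Hypothetical 3-component vector, for illustration only */
typedef struct { float x, y, z; } Vec3;

/* Mirrors: lightVec = LightPosition - viewPos.xyz
 * The result points from the vertex towards the light. */
static Vec3 calcLightVec(Vec3 lightPosView, Vec3 vertPosView) {
    Vec3 v = { lightPosView.x - vertPosView.x,
               lightPosView.y - vertPosView.y,
               lightPosView.z - vertPosView.z };
    return v;
}

/* Mirrors: eyeVec = -viewPos.xyz
 * The camera sits at the origin in view space, so the vector from the
 * vertex back to the eye is simply the negated position. */
static Vec3 calcEyeVec(Vec3 vertPosView) {
    Vec3 v = { -vertPosView.x, -vertPosView.y, -vertPosView.z };
    return v;
}
```

Both inputs must be in view space for the results to be meaningful, which is why the shader transforms the vertex by ModelViewMatrix first.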

Fragment Shader (Colour3D.frag)

Let’s switch from GLSL 1.4 to GLSL ES 3.1. Replace “#version 140” at the top of the fragment shader with:

    #version 310 es
    #ifdef GL_ES
    precision mediump float;
    #endif

Why? The fragment shader now has uniform variables, and we want to use the new layout qualifier to fix the uniform variable order. That’s not available in version 1.4.

Inputs, Uniforms, and Outputs

The fragment shader has new inputs to read:

    in vec4 colour;
    in vec3 normal;
    in vec3 lightVec;
    in vec3 eyeVec;

It also needs to know the light and material properties:

    uniform layout(location = 0) vec4 AmbientCol; // The light and object's combined ambient colour
    uniform layout(location = 1) vec4 DiffuseCol; // The light and object's combined diffuse colour
    uniform layout(location = 2) vec4 SpecularCol; // The light and object's combined specular colour
    uniform layout(location = 3) float Shininess;

GLSL ES 3.1 no longer has gl_FragColor built in, so we must declare the output:

out vec4 fragColor;

The Actual Code

Here’s the updated main() in full:

const float invRadiusSq = 0.00001;

void main() {

// Calculate the light attenuation, and direction

float distSq = dot(lightVec, lightVec);

float attenuation = clamp(1.0 - invRadiusSq * sqrt(distSq), 0.0, 1.0);

attenuation *= attenuation;

vec3 lightDir = lightVec * inversesqrt(distSq);

// Normalize the eye direction vector

vec3 eyeDir = normalize(eyeVec);

// Ambient lighting

vec4 ambient = vec4(AmbientCol * colour);

// Diffuse lighting

vec4 diffuse = max(dot(lightDir, normal), 0.0) * DiffuseCol * colour;

// Specular lighting

vec4 specular = SpecularCol * pow(clamp(dot(reflect(-lightDir, normal), eyeDir), 0.0, 1.0), Shininess);

// The final colour

// NOTE: Alpha channel shouldn't be affected by lights

vec4 finalColour = (ambient + diffuse + specular) * attenuation;

fragColor = vec4(finalColour.xyz, colour.w);

}

The first section of code calculates the light’s attenuation and direction. Attenuation is how much the light’s intensity has dropped off as it travelled from the light to the surface.

    // Calculate the light attenuation, and direction
    float distSq = dot(lightVec, lightVec);
    float attenuation = clamp(1.0 - invRadiusSq * sqrt(distSq), 0.0, 1.0);
    attenuation *= attenuation;
    vec3 lightDir = lightVec * inversesqrt(distSq);

This roughly models a small spherical light with a maximum reach radius (where invRadiusSq is 1 / radius²). It’s not physically accurate, but it works.

Next, the eye direction vector is normalized to have a length of one:

    // Normalize the eye direction vector
    vec3 eyeDir = normalize(eyeVec);

Now we get to the core lighting calculations. The ambient, diffuse and specular lighting components are calculated one by one:

    // Ambient lighting
    vec4 ambient = vec4(AmbientCol * colour);
    // Diffuse lighting
    vec4 diffuse = max(dot(lightDir, normal), 0.0) * DiffuseCol * colour;
    // Specular lighting
    vec4 specular = SpecularCol * pow(clamp(dot(reflect(-lightDir, normal), eyeDir), 0.0, 1.0), Shininess);

Compare these to the Phong lighting equations; make sure you can see how the mathematics and code relate.

Finally, the ambient, diffuse, and specular lighting are combined to form the final colour. They’re also multiplied by the light attenuation to get the right light intensity. Doing this here is mathematically identical to multiplying each component separately, but it’s faster.

    // The final colour
    // NOTE: Alpha channel shouldn't be affected by lights
    vec4 finalColour = (ambient + diffuse + specular) * attenuation;
    fragColor = vec4(finalColour.xyz, colour.w);

Step 3: Adding Surface Normals

The surface normals need to be added to the vertices. This is a two-step process. First, update the Vertex structure:

/** Encapsulates the data for a single vertex

*/

typedef struct Vertex_s {

float position[3];

float colour[4];

float normal[3];

} Vertex;

Next, the Vertex Buffer Object (VBO) needs to be adjusted to fit the new data. The original VBO creation code will mostly adapt to the new vertex size, but we do need to increase numArrays to 4. Here’s the updated code in full:

    // Create the Vertex Buffer Object (VBO) containing the cube
    float cubeSize_2 = 100.0f / 2.0f; // Half the cube's size
    uint32 numSides = 6;
    uint32 vertsPerSide = 4;
    uint32 numVerts = numSides * vertsPerSide;
    uint32 numArrays = 4; // Have position, colour, normal, and index arrays
    uint32 indicesPerSide = 2 * 3; // Rendering 2 triangles per side of the cube
    uint32 numIndices = indicesPerSide * numSides;
    vbo = context->CreateVertexBufferObjectTags(&errCode,
        numVerts * sizeof(Vertex) + numIndices * sizeof(uint32),
        W3DN_STATIC_DRAW, numArrays, TAG_DONE);
    FAIL_ON_ERROR(errCode, "CreateVertexBufferObjectTags");

The VBO’s layout also needs to be updated to include the vertex normal array. There are two separate changes to be made. First, the *ArrayIdx variables (e.g., posArrayIdx) need updating to include normalArrayIdx:

    // Indices of the vertex and index arrays
    const uint32 posArrayIdx = 0;
    const uint32 colArrayIdx = 1;
    const uint32 normalArrayIdx = 2;
    const uint32 indexArrayIdx = 3;

Notice that indexArrayIdx has been moved over to make room for normalArrayIdx. The computer doesn’t care about the order, but it makes understanding the code easier for us if we group things nicely.

Next comes the code to set the VBO’s layout. Here, we add the normal array to the VBO. It’s interleaved with the position and colour arrays, so that each vertex’s data is in one place:

    // Set the VBO's layout
    Vertex *vert = NULL;
    uint32 stride = sizeof(Vertex);
    uint32 posNumElements = sizeof(vert->position) / sizeof(vert->position[0]);
    uint32 colourNumElements = sizeof(vert->colour) / sizeof(vert->colour[0]);
    uint32 colourArrayOffset = offsetof(Vertex, colour[0]);
    uint32 normalNumElements = sizeof(vert->normal) / sizeof(vert->normal[0]);
    uint32 normalArrayOffset = offsetof(Vertex, normal[0]);
    uint32 indexArrayOffset = numVerts * sizeof(Vertex);
    errCode = context->VBOSetArray(vbo, posArrayIdx, W3DNEF_FLOAT, FALSE, posNumElements,
        stride, 0, numVerts);
    FAIL_ON_ERROR(errCode, "VBOSetArray");
    errCode = context->VBOSetArray(vbo, colArrayIdx, W3DNEF_FLOAT, FALSE, colourNumElements,
        stride, colourArrayOffset, numVerts);
    FAIL_ON_ERROR(errCode, "VBOSetArray");
    errCode = context->VBOSetArray(vbo, normalArrayIdx, W3DNEF_FLOAT, FALSE, normalNumElements,
        stride, normalArrayOffset, numVerts);
    FAIL_ON_ERROR(errCode, "VBOSetArray");
    errCode = context->VBOSetArray(vbo, indexArrayIdx, W3DNEF_UINT32, FALSE, 1,
        sizeof(uint32), indexArrayOffset, numIndices);
    FAIL_ON_ERROR(errCode, "VBOSetArray");
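If the stride and offset values feel abstract, the following standalone C sketch prints them for the Vertex structure. It uses only standard C (no Warp3D Nova calls), and the two function names are made up for this example. With 4-byte floats, the stride comes to 40 bytes, with colour starting at byte 12 and the normal at byte 28.

```c
#include <stddef.h>
#include <stdio.h>

/* Same layout as the tutorial's Vertex structure */
typedef struct Vertex_s {
    float position[3];
    float colour[4];
    float normal[3];
} Vertex;

/* Byte offset of the index array, which is stored after all the vertices */
static size_t calcIndexArrayOffset(size_t numVerts) {
    return numVerts * sizeof(Vertex);
}

/* Prints where each interleaved array starts within one vertex */
static void printVertexLayout(void) {
    printf("stride:          %lu bytes\n", (unsigned long)sizeof(Vertex));
    printf("position offset: %lu\n", (unsigned long)offsetof(Vertex, position));
    printf("colour offset:   %lu\n", (unsigned long)offsetof(Vertex, colour));
    printf("normal offset:   %lu\n", (unsigned long)offsetof(Vertex, normal));
}
```

Because the offsets come from offsetof() and sizeof(), they follow the structure automatically. That is why adding the normal field only required a few extra lines in the layout code.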

With the layout set, the surface normals are added to the actual vertex array data. The surface normal points in a different direction for each face, as follows:

| Face | Surface Normal |
| --- | --- |
| front | (0, 0, 1) |
| back | (0, 0, -1) |
| left | (-1, 0, 0) |
| right | (1, 0, 0) |
| top | (0, 1, 0) |
| bottom | (0, -1, 0) |

Imagine holding the cube in your hands. Imagine that each side has an arrow pointing straight out of the surface at 90 degrees (i.e., perpendicularly). Those imaginary arrows are the surface normals, and the vectors listed above are those normals in numerical vector format.

Here’s the code for one vertex with the surface normal:

// Front face (red)

vert[i++] = (Vertex){{-cubeSize_2, -cubeSize_2, cubeSize_2},

{red[0], red[1], red[2], red[3]}, {0.0f, 0.0f, 1.0f}};

The surface normal is there at the end ({0.0f, 0.0f, 1.0f}).

Finally, we also need to bind the normal array to the vertex shader’s vertNormal input:

    errCode = context->BindVertexAttribArray(NULL, normalAttribIdx, vbo, normalArrayIdx);
    FAIL_ON_ERROR(errCode, "BindVertexAttribArray");

Here’s the full vertex bind code:

    // Map vertex attributes to indices
    // NOTE: The vertex attributes are ordered using the modern GLSL layout(location = n)
    // qualifier (see the vertex shader). This ensures that they're always located at the
    // right place, and eliminates the need to call ShaderGetOffset(). Setting fixed
    // locations in this manner is highly recommended
    uint32 posAttribIdx = 0;
    uint32 colAttribIdx = 1;
    uint32 normalAttribIdx = 2;

    // Bind the VBO to the default Render State Object (RSO)
    errCode = context->BindVertexAttribArray(NULL, posAttribIdx, vbo, posArrayIdx);
    FAIL_ON_ERROR(errCode, "BindVertexAttribArray");
    errCode = context->BindVertexAttribArray(NULL, colAttribIdx, vbo, colArrayIdx);
    FAIL_ON_ERROR(errCode, "BindVertexAttribArray");
    errCode = context->BindVertexAttribArray(NULL, normalAttribIdx, vbo, normalArrayIdx);
    FAIL_ON_ERROR(errCode, "BindVertexAttribArray");

Step 4: Set Up The Light

A light needs to be added to the scene, or the cube won’t be visible. This is fairly easy. Add the following just below the code that defines the camera position (search for CAM_STARTPOS):

    // The light's position
    #define LIGHT_STARTPOS_X (CAM_STARTPOS_X + 100)
    #define LIGHT_STARTPOS_Y (CAM_STARTPOS_Y + 25)
    #define LIGHT_STARTPOS_Z (CAM_STARTPOS_Z)

Now add a lightPos variable to main(). I recommend putting it just below the projectionMatrix declaration:

kmVec3 lightPos = {LIGHT_STARTPOS_X, LIGHT_STARTPOS_Y, LIGHT_STARTPOS_Z}; // The light's position

Step 5: Calculate the Shader Constants

We’re almost there. The next task is to pass the parameters to the GPU as shader uniforms (constants) so it can calculate the lighting.

Both the vertex and fragment shaders have uniform variables this time. Fortunately, Warp3D Nova allows us to store data for multiple shaders in one Data Buffer Object (DBO), and that makes managing the data easier.

First step: create a DBO that stores two buffers instead of one; one buffer for each shader.

// This contains the vertex shader's constant data

// NOTE: Using W3DN_STREAM_DRAW, because we will be updating the matrices

// every time

dbo = context->CreateDataBufferObjectTags(&errCode,

sizeof(VertexShaderData), W3DN_STREAM_DRAW, 2, TAG_DONE);

if(!dbo) {

printf("context->CreateDataBufferObjectTags() failed (%u): %s\n",

errCode, IW3DNova->W3DN_GetErrorString(errCode));

retCode = 10;

goto CLEANUP;

}

Next, we’re going to perform a quick safety check to make sure that our C code and shader code’s data buffer formats are in sync. This simply checks whether the sizes of the data buffers match. The code already checks this for the vertex shader; all that’s required is a similar check for the fragment shader:

// Quick check to make sure that the fragment shader data struct matches the shader

uint64 expectedFragShaderDataSize = sizeof(FragmentShaderData);

uint64 fragShaderDataSize = context->ShaderGetTotalStorage(fragShader);

if(fragShaderDataSize != expectedFragShaderDataSize) {

printf("ERROR: Expected the fragment shader's data size to be %lu bytes, but "

"it was %lu instead. Did you update the shader without updating FragmentShaderData?",

(unsigned long)expectedFragShaderDataSize, (unsigned long)fragShaderDataSize);

retCode = 10;

goto CLEANUP;

}

This safety check will fail right now because we haven’t updated the data structures (and FragmentShaderData doesn’t even exist yet). Don’t worry, we take care of that below.

One more thing to do first, though; the shader data buffers need to be bound to their respective shaders. Here’s the full code to achieve this:

    // Binding the DBO
    errCode = context->BindShaderDataBuffer(NULL, W3DNST_VERTEX, dbo, vertShaderDataIdx);
    FAIL_ON_ERROR(errCode, "BindShaderDataBuffer");
    errCode = context->BindShaderDataBuffer(NULL, W3DNST_FRAGMENT, dbo, fragShaderDataIdx);
    FAIL_ON_ERROR(errCode, "BindShaderDataBuffer");

NOTE: The original code already binds the vertex shader data.

VertexShaderData

First up, find the VertexShaderData structure, and update it to match the uniform variables in the updated shader:

/** The structure for the vertex shader's uniform data.

* IMPORTANT: Keep this synchronized with the shader!

*/

typedef struct VertexShaderData_s {

kmMat4 projMatrix; // ProjectionMatrix

kmMat4 mvMatrix; // ModelViewMatrix

kmMat4 normalMatrix; // NormalMatrix

kmVec3 lightPos; // LightPosition

} VertexShaderData;

Next, find the code that generates the vertex uniforms (search for “Build the MVP matrix”), and replace it with the following:

// Build the matrices

kmMat4 mvMat;

kmMat3 normalRot;

kmMat4 normalMat;

kmMat4Multiply(&mvMat, &viewMat, &modelMat);

// Normal matrix is the inverse transpose of the model-view matrix's 3x3 rotation part

kmMat4ExtractRotationMat3(&mvMat, &normalRot);

kmMat3Inverse(&normalRot, &normalRot);

kmMat3Transpose(&normalRot, &normalRot);

kmMat4AssignMat3(&normalMat, &normalRot);

// Update the shader's constant data

W3DN_BufferLock *bufferLock = context->DBOLock(&errCode, dbo, 0, 0);

if(!bufferLock) {

printf("context->DBOLock() failed (%u): %s\n",

errCode, IW3DNova->W3DN_GetErrorString(errCode));

retCode = 10;

goto CLEANUP;

}

VertexShaderData *shaderData = (VertexShaderData*)bufferLock->buffer;

shaderData->projMatrix = projectionMat;

shaderData->mvMatrix = mvMat;

shaderData->normalMatrix = normalMat;

shaderData->lightPos = lightPos;

context->BufferUnlock(bufferLock, 0, sizeof(VertexShaderData));

Pay close attention to how the normal matrix is calculated in the code above. It’s the inverse (kmMat3Inverse()) transpose (kmMat3Transpose()) of the MV matrix’s rotation part (the top left 3×3 sub-matrix, as extracted by kmMat4ExtractRotationMat3()). If you want the gory details, then here’s a good article explaining why (link).
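Here is a quick way to convince yourself that the inverse transpose matters. The C sketch below is standalone and doesn't use kazmath; Mat3, Vec3 and the function names are invented for this example, and the matrix is stored row-major purely for readability. It relies on the fact that the inverse transpose of a 3×3 matrix equals its cofactor matrix divided by its determinant.

```c
/* Hypothetical types, for illustration only (row-major: m[row][column]) */
typedef struct { float m[3][3]; } Mat3;
typedef struct { float x, y, z; } Vec3;

/* Writes the inverse transpose of 'in' to 'out'.
 * Returns 1 on success, or 0 if 'in' is singular. */
static int mat3InverseTranspose(const Mat3 *in, Mat3 *out) {
    const float (*a)[3] = in->m;
    float c[3][3];
    int i, j;

    /* Cofactor matrix */
    c[0][0] = a[1][1] * a[2][2] - a[1][2] * a[2][1];
    c[0][1] = a[1][2] * a[2][0] - a[1][0] * a[2][2];
    c[0][2] = a[1][0] * a[2][1] - a[1][1] * a[2][0];
    c[1][0] = a[0][2] * a[2][1] - a[0][1] * a[2][2];
    c[1][1] = a[0][0] * a[2][2] - a[0][2] * a[2][0];
    c[1][2] = a[0][1] * a[2][0] - a[0][0] * a[2][1];
    c[2][0] = a[0][1] * a[1][2] - a[0][2] * a[1][1];
    c[2][1] = a[0][2] * a[1][0] - a[0][0] * a[1][2];
    c[2][2] = a[0][0] * a[1][1] - a[0][1] * a[1][0];

    float det = a[0][0] * c[0][0] + a[0][1] * c[0][1] + a[0][2] * c[0][2];
    if (det == 0.0f) {
        return 0;
    }
    for (i = 0; i < 3; i++) {
        for (j = 0; j < 3; j++) {
            out->m[i][j] = c[i][j] / det;
        }
    }
    return 1;
}

/* Matrix times column vector */
static Vec3 mat3MulVec(const Mat3 *mat, Vec3 v) {
    Vec3 r = { mat->m[0][0] * v.x + mat->m[0][1] * v.y + mat->m[0][2] * v.z,
               mat->m[1][0] * v.x + mat->m[1][1] * v.y + mat->m[1][2] * v.z,
               mat->m[2][0] * v.x + mat->m[2][1] * v.y + mat->m[2][2] * v.z };
    return r;
}
```

Try it with a matrix that scales x by 2. A surface direction (1, 1, 0) becomes (2, 1, 0). Its normal (1, -1, 0) transformed by the same matrix becomes (2, -1, 0), which is no longer perpendicular to the surface. Transformed by the inverse transpose it becomes (0.5, -1, 0), which is. For pure rotations the inverse transpose is the rotation itself, so the extra work only matters once scaling is involved.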

FragmentShaderData

Create a FragmentShaderData structure matching the shader’s uniform data:

/** The structure for the fragment shader's uniform data.

* IMPORTANT: Keep this synchronized with the shader!

*/

typedef struct FragmentShaderData_s {

kmVec4 ambientCol;

kmVec4 diffuseCol;

kmVec4 specularCol;

float shininess;

} FragmentShaderData;

I recommend putting it directly below the VertexShaderData declaration.

The DBO needs to be bigger to store data for both shaders, so update the size passed to CreateDataBufferObjectTags():

dbo = context->CreateDataBufferObjectTags(&errCode, sizeof(VertexShaderData) + sizeof(FragmentShaderData), W3DN_STREAM_DRAW, 2, TAG_DONE);

The total buffer size is now sizeof(VertexShaderData) + sizeof(FragmentShaderData).

IMPORTANT: Make sure you get the sizes of your buffers right. Make them too small and you’ll end up writing to memory you don’t own. Bad things happen when you do that…

As with the vertex shader, the fragment shader’s data also needs to be written into the DBO. We could do both at the same time, but with only one cube the fragment shader’s data remains constant. So, let’s do this separately. It’ll allow me to show you how to update parts of a DBO (or VBO):

// Writing the fragment shader data here because they stay constant (only one material & light are used)

// NOTE: These values are combined light & material values

W3DN_BufferLock *bufferLock = context->DBOLock(&errCode, dbo, 0, 0);

if(!bufferLock) {

printf("context->DBOLock() failed (%u): %s\n",

errCode, IW3DNova->W3DN_GetErrorString(errCode));

retCode = 10;

goto CLEANUP;

}

FragmentShaderData *shaderData =

(FragmentShaderData*)((uint8*)bufferLock->buffer + fragShaderDataOffset);

shaderData->ambientCol = (kmVec4){0.025f, 0.025f, 0.025f, 1.0f};

shaderData->diffuseCol = (kmVec4){1.2f, 1.2f, 1.2f, 1.0f};

shaderData->specularCol = (kmVec4){1.5f, 1.5f, 1.5f, 1.0f};

shaderData->shininess = 400.0f;

context->BufferUnlock(bufferLock, fragShaderDataOffset, sizeof(FragmentShaderData));

The key to updating only part of the DBO is in the BufferUnlock() call. Passing fragShaderDataOffset and sizeof(FragmentShaderData) tells Warp3D Nova to only update the part containing the fragment shader’s data.
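The snippets above use fragShaderDataOffset without showing where it comes from. The following standalone C sketch shows one plausible layout, assuming the fragment shader's data is stored directly after the vertex shader's data. It uses a plain byte array in place of a locked DBO, and stand-in structures with the same field sizes as the tutorial's (16 floats per kmMat4, 3 per kmVec3, 4 per kmVec4). The function name is made up, and Warp3D Nova may impose alignment rules that this sketch doesn't model.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Stand-ins for the tutorial's structures (same sizes, plain float arrays) */
typedef struct {
    float projMatrix[16];
    float mvMatrix[16];
    float normalMatrix[16];
    float lightPos[3];
} VertexShaderData;

typedef struct {
    float ambientCol[4];
    float diffuseCol[4];
    float specularCol[4];
    float shininess;
} FragmentShaderData;

/* ASSUMPTION: the fragment data directly follows the vertex data */
static const size_t fragShaderDataOffset = sizeof(VertexShaderData);

/* Writes only the fragment shader's part of the buffer.
 * 'buffer' must hold at least
 * sizeof(VertexShaderData) + sizeof(FragmentShaderData) bytes. */
static void writeFragmentData(uint8_t *buffer, const FragmentShaderData *src) {
    memcpy(buffer + fragShaderDataOffset, src, sizeof(*src));
}
```

The vertex shader's part of the buffer is left untouched, which mirrors what the offset and size passed to BufferUnlock() communicate to the driver.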

Conclusion

Phew! That was a lot of work. We implemented Phong shading in shaders, and updated both the vertex array and render code to give those shaders the extra data that they needed.

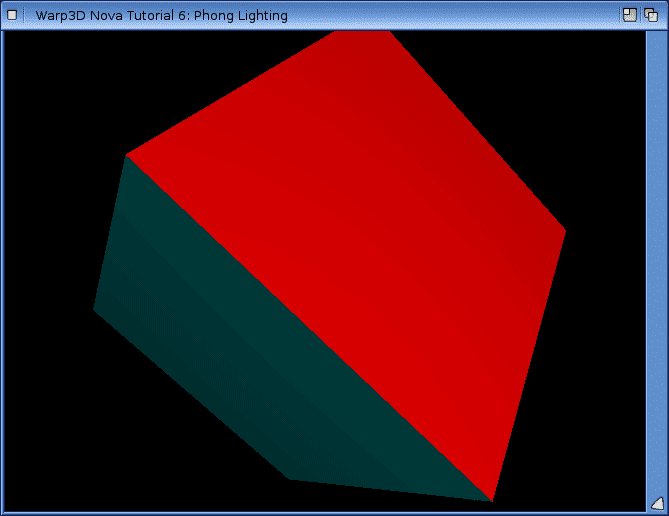

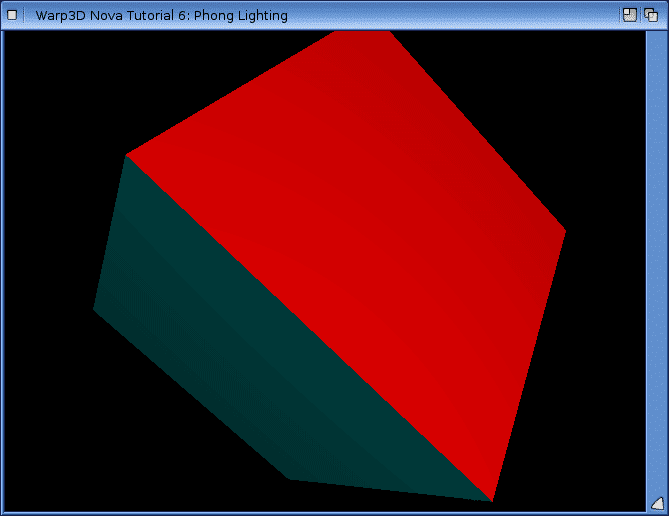

You now have a program that renders 3D with lighting. Real progress! Here’s a screenshot of what it looks like.

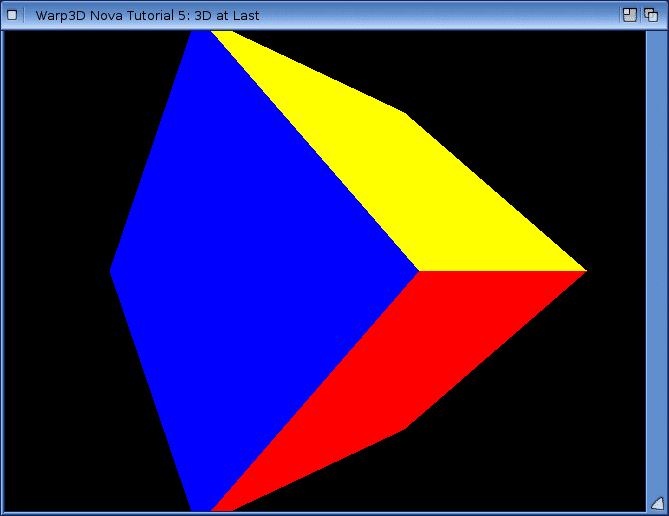

Compare that to the flat-looking cube from last time:

It looks more realistic with lighting, so a definite improvement.

You can download the source code here: W3DNovaTutorial6.lha

The downloadable source code also animates the cube so that the lighting is more obvious. I was going to cover animation here, but decided that would be too much. So that’ll be covered next time.

Got comments or questions? Contact me here or post them in the comments section below.